It seems the impact of AI on employees in the healthcare and life sciences sector is being underestimated by many in the industry, despite its undeniable disruptive potential. Organisations must do more to embrace AI and ensure its proper integration, linking its adoption and use to clear business outcomes. In this article, we will take a deep dive into key insights from Hunton Executive’s Future of Work in Healthcare & Life Sciences report.

A recent report from Hunton Executive, The Future of Work in Healthcare & Life Sciences, revealed an interesting contradiction when it comes to perceptions around AI’s impact in the workplace.

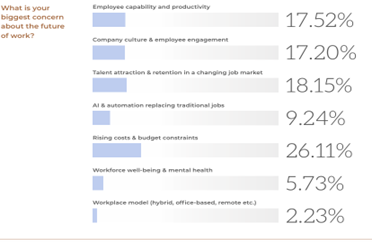

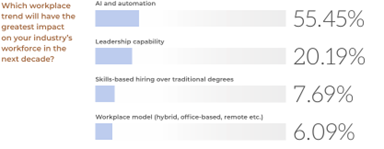

On the one hand, just 9.2% of respondents said they were concerned about AI’s potential to replace people. Paradoxically, however, when asked about the biggest impacts on the workforce over the next decade, they placed AI and automation firmly at the top of the list.

According to Amy Pillay, Executive Director of Health, MedTech and Life Sciences at Accenture, this suggests that many people lack a deep understanding of AI’s full potential as a disruptor, and may not appreciate how urgently they need to build the skills to work alongside this new technology.

“While AI’s potential to automate tasks and augment human capabilities is widely recognised, many people are not equating this with a risk of job displacement for several reasons,” she says. One reason is that organisations often frame AI as a tool to augment rather than replace human capabilities, an approach that may be calculated to ease anxieties about AI as a threat. While this is understandable, it’s vital that organisations remain aware of the very real dangers AI could pose to the workforce if it is not harnessed effectively.

Amy believes AI will be a springboard or enabler in fields such as data science and ethics. Beyond automating routine work such as administration, data entry and certain manufacturing roles, AI also has the potential to extend well into areas traditionally thought of as non-automatable. This creates an urgent need to protect human capital by prioritising reskilling and adaptation, within a workforce model that accounts for AI’s expanding reach. It is no longer enough to move people into areas where AI is assumed, perhaps wrongly, to be unable to operate; instead, workforce adaptation should centre on the ability to collaborate with and use AI tools.

A complete transformation

While many respondents have arguably underrated AI as a near-term threat, they placed AI and automation firmly at the top of the list of impacts on the workplace over the next decade. This aligns with data from Accenture showing that generative AI has the potential to transform language-based tasks, which make up around 40% of the industry’s total workload: 17% of that total workload could be fully automated, while a further 23% could be augmented to improve human productivity. Technologies such as large language models (LLMs) can automate language-heavy tasks that currently consume 10% to 30% of clinical workforce time[i].
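To make these shares more concrete, the short sketch below applies them to a single working week. The 40-hour week and the function names are hypothetical illustrations, not figures from the Accenture report; only the percentages come from the text above.

```python
# Shares of total workload cited above; the 40-hour week used below is a hypothetical example.
LANGUAGE_TASK_SHARE = 0.40   # language-based tasks as a share of total workload
AUTOMATABLE_SHARE = 0.17     # share of total workload that could be fully automated
AUGMENTABLE_SHARE = 0.23     # share of total workload that could be augmented

# The automatable and augmentable shares together make up the language-based 40%.
assert abs((AUTOMATABLE_SHARE + AUGMENTABLE_SHARE) - LANGUAGE_TASK_SHARE) < 1e-9


def weekly_breakdown(hours_per_week: float) -> dict[str, float]:
    """Split a hypothetical working week into automatable, augmentable and other hours."""
    automatable = hours_per_week * AUTOMATABLE_SHARE
    augmentable = hours_per_week * AUGMENTABLE_SHARE
    other = hours_per_week - automatable - augmentable
    return {
        "automatable": round(automatable, 1),
        "augmentable": round(augmentable, 1),
        "other": round(other, 1),
    }


def clinical_language_hours(hours_per_week: float) -> tuple[float, float]:
    """Range of clinical hours spent on language-heavy tasks (10% to 30% of time)."""
    return (round(hours_per_week * 0.10, 1), round(hours_per_week * 0.30, 1))


print(weekly_breakdown(40))
print(clinical_language_hours(40))
```

On these assumptions, roughly 6.8 hours of a 40-hour week would be automatable and 9.2 hours augmentable, while language-heavy tasks would take up between 4 and 12 hours of a clinician’s week.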

Broken down this way, the numbers show just how high the risk of job displacement is, especially given the speed at which significantly improved generations of AI models are being launched. Without a solid understanding of what AI can actually do, especially in areas traditionally thought of as ‘safe’ from replacement, companies and individuals risk being blindsided.

A good example of an unexpected development in AI is its impact on psychotherapy. As generative models became better at processing complex human emotions, the number of people using ChatGPT and other AI products for psychotherapy or counselling grew rapidly. A study published in the US National Institutes of Health’s PubMed Central archive[ii] found that AI chatbots could play a significant role in future mental health services, offering an innovative response to the mismatch between supply and demand.

While this is arguably a positive outcome, the phenomenon deserves closer examination. In a field that seemed inherently dependent on human contact, human and technological factors beyond anyone’s control created a situation in which major health institutions felt compelled to develop taxonomies of how AI might replace human therapists. If AI can fundamentally disrupt something so apparently dependent on human-to-human interaction, we would all be well advised to recalibrate our risk calculations accordingly.

The surprising reach and impact of AI isn’t confined to isolated cases either. Alex Lee, Accenture Australia’s Data and AI lead for Life Sciences, says there is a major gap in the understanding of AI’s ubiquity. Whether it’s checking the weather, touching up photos, or filtering email spam, AI is now a key enabler in so many of our daily activities. The fact that it’s embedded in trusted tools, however, can mask the extent of the technology’s spread, and muddy our perceptions of its potential to disrupt or perhaps entirely transform whole areas of future work.

AI adoption – challenges and solutions

Despite AI’s clear potential to transform the world of work, several factors are slowing its adoption and integration. While this may slow the pace of change, it also creates a significant risk: companies that delay adoption may be caught flat-footed, surrounded and outclassed by AI-enabled competitors.

Alex from Accenture identifies several clear challenges to AI adoption that companies should address:

- Lack of understanding: Many companies and individuals lack a clear understanding of AI’s actual capabilities and of the rate and scale of change. The field of AI is vast and highly volatile, which makes it hard for anyone to track its development and evolution.

- Complex regulatory environment: The sector operates under strict regulations, which can slow down the adoption of AI technologies. Organisations may not fully account for how quickly AI can adapt to compliance needs.

- Infrastructure readiness: Some organisations may lack the digital infrastructure required to implement AI effectively, leading them to believe transformation will take longer than might actually be the case with proper investment.

- Skill gaps: The workforce may not yet be equipped with the skills needed to leverage AI, causing organisations to underestimate the speed at which uptake can occur with targeted upskilling initiatives.

- Exponential growth of AI: AI technologies, particularly generative AI, are advancing at an exponential rate. Organisations that rely on linear projections may fail to anticipate the actual speed of AI’s impact.

[i] https://www.accenture.com/content/dam/accenture/final/accenture-com/document-3/AI-Amplified-Scaling-Productivity-Final.pdf

[ii] https://pmc.ncbi.nlm.nih.gov/articles/PMC11560757/

Addressing the challenges

Some of the common challenges in AI adoption in healthcare & life sciences include:

- Data Privacy and Security: The sensitive nature of healthcare data, coupled with strict regulations like HIPAA and GDPR, creates significant challenges around governance and compliance.

- Data Quality and Integration: AI systems need high-quality, structured data to function effectively. Many organisations struggle with fragmented or non-standardised data spread across systems.

- Regulatory and Ethical Concerns: The sector is heavily regulated, and organisations must navigate complex approval processes while ensuring ethical AI use, such as avoiding bias in algorithms.

- Infrastructure Limitations: Scaling AI requires robust digital infrastructure, including cloud computing and advanced analytics platforms, which some organisations lack.

- Workforce Readiness: AI-skilled professionals are in short supply, and resistance to change among existing staff can hinder adoption.

- Cost and ROI Uncertainty: Implementing AI solutions can be expensive, and organisations may hesitate due to unclear or delayed returns on investment.

- Interoperability Issues: AI systems must integrate seamlessly with existing technologies and workflows, which can create significant technical challenges.

- Trust and Transparency: Building trust in AI systems among clinicians, patients, and stakeholders is critical, especially when decisions are made by algorithms.

Alex points out, however, that the real challenge of AI lies in translating abstract concepts into practical, measurable value that drives adoption. This is especially difficult in decentralised organisations, where teams may adopt AI tools independently, fragmenting both risk and value and making them harder to identify and manage. Effective AI governance requires clear internal guidelines and ongoing monitoring to ensure that both off-the-shelf and custom models remain accurate and fit for purpose. As AI technologies evolve rapidly, organisations must build the internal capability to measure and manage AI’s risks and rewards.

Clear guidelines of this kind can also give teams the confidence to experiment. Many individuals use AI tools such as ChatGPT in their personal lives but remain hesitant to apply them at work, often because of concerns about data protection or uncertainty about how to do so safely.

At the same time, Alex says there’s a clear gap between knowledge and expectation. He recalls one instance where a client wanted to use AI to accelerate their patent application process. On further consultation, it became apparent that what they had envisioned was the automated documentation of early-stage concepts in their engineers’ brains. “I had to explain that we don’t have established brain-to-text interfaces, at least not yet,” he says. “The world of AI has evolved so quickly that what seemed like science fiction three years ago is now real, yet some people still over-estimate its current abilities.”

To scale AI effectively across large organisations, Alex says it’s essential to start by measuring return on investment. The key is to ensure that every instance of adoption targets a real business problem: start with a clear problem statement and build a model in direct response to it. When AI is tied to business value, it is easier to gain internal support and to drive adoption by demonstrating results.
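The idea of tying each AI initiative to a stated business problem and a measurable return can be expressed as a simple check. The sketch below is a minimal illustration under stated assumptions: the `AIPilot` class, the `should_scale` helper and the 20% threshold are hypothetical, and ROI is taken as the standard ratio of net benefit to cost.

```python
from dataclasses import dataclass


@dataclass
class AIPilot:
    """A hypothetical AI pilot tied to a specific business problem."""
    problem_statement: str
    annual_cost: float     # total cost of building and running the pilot
    annual_benefit: float  # measured value, e.g. staff hours saved x hourly cost

    def roi(self) -> float:
        """Return on investment: net benefit as a fraction of cost."""
        if self.annual_cost <= 0:
            raise ValueError("annual_cost must be positive")
        return (self.annual_benefit - self.annual_cost) / self.annual_cost


def should_scale(pilot: AIPilot, min_roi: float = 0.2) -> bool:
    """Scale only pilots that state a problem and clear a chosen ROI threshold.

    The default threshold of 0.2 (20%) is a hypothetical example value.
    """
    has_problem = bool(pilot.problem_statement.strip())
    return has_problem and pilot.roi() >= min_roi


pilot = AIPilot(
    problem_statement="Reduce time spent drafting discharge summaries",
    annual_cost=100_000,
    annual_benefit=150_000,
)
print(pilot.roi(), should_scale(pilot))
```

In this hypothetical case, a pilot costing 100,000 a year that delivers 150,000 in measured benefit has an ROI of 0.5 and passes the check, while a pilot with no problem statement fails regardless of its numbers. The threshold itself is a business decision rather than a fixed rule.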

Additionally, governance considerations must be built in from the very start, including ensuring data quality and questioning whether legacy data that feeds AI systems reflects the organisation’s current values. This is key, as governance after the fact is much more difficult to build, and may fail to counteract risks that arise during adoption.

Key actions for successful AI adoption

With all this in mind, here is a list of simple but powerful actions that can help ensure the successful integration of AI tools. They are designed not only to minimise friction but also to support strong ethical decision-making, especially where employee welfare is concerned.

- Define Clear Objectives: Establish specific goals for AI adoption, such as improving operational efficiency, enhancing patient outcomes, or accelerating drug discovery.

- Invest in Data Management: Ensure data is high-quality, standardised, and accessible. Implement robust data governance frameworks to address privacy and security concerns.

- Build a Strong Digital Infrastructure: Develop the necessary technological foundation, including cloud computing, advanced analytics platforms, and interoperability between systems.

- Upskill the Workforce: Provide training programs to equip employees with AI-related skills and foster a culture of collaboration between humans and AI systems.

- Start Small and Scale Gradually: Begin with pilot projects to test AI solutions, gather insights, and refine processes before scaling across the organisation.

- Collaborate with Stakeholders: Engage clinicians, patients, regulators, and other stakeholders to build trust and ensure ethical AI use.

- Monitor and Evaluate Performance: Establish metrics to measure the impact of AI initiatives and continuously optimise systems based on feedback.

- Ensure Ethical and Responsible AI Use: Develop frameworks to address bias, transparency, and accountability, ensuring AI aligns with organisational values and regulatory requirements.

- Leverage Partnerships: Collaborate with technology providers, research institutions, and industry peers to access expertise and accelerate innovation.

- Secure Funding and Leadership Support: Ensure adequate resources and buy-in from leadership to drive AI initiatives forward.

Conclusion

AI represents both a threat and a significant opportunity, and organisations must ensure they gain a clear and accurate understanding of its current and future potential before formulating an AI strategy. An effective AI strategy will be one that accounts for human capital, technological infrastructure, adoption programs structured around clear business needs, and a ‘governance first’ approach.
